The Amazing Potential Of A Requisition System

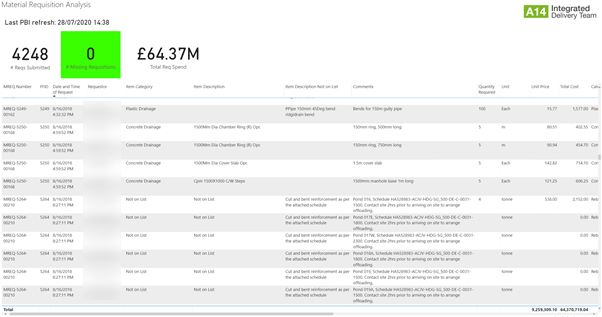

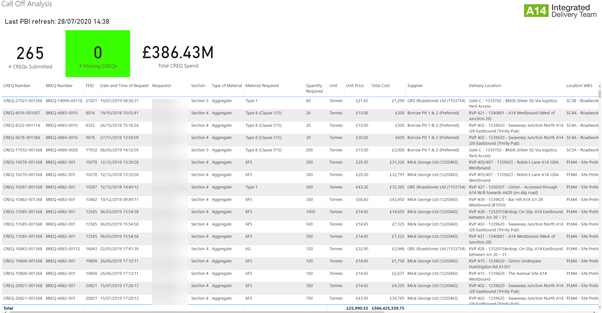

Last week I was building a Power BI report for a client so they could analyse data from the requisition system which we had built for them back in 2018. While putting everything together, I added a tile out of curiosity to calculate total spend across the different systems. It’s a huge project and the system has been in place for over two years, so I thought maybe £100M at a push had gone through it. Imagine my surprise when it transpired that over £400M of spend, spread over 4,500 requisitions and 10,000 line items, had gone through the system. That is a lot of money spent in just two years, but it’s also a lot of data which we can leverage for detailed analytics, as well as a robust record when we are audited by clients.

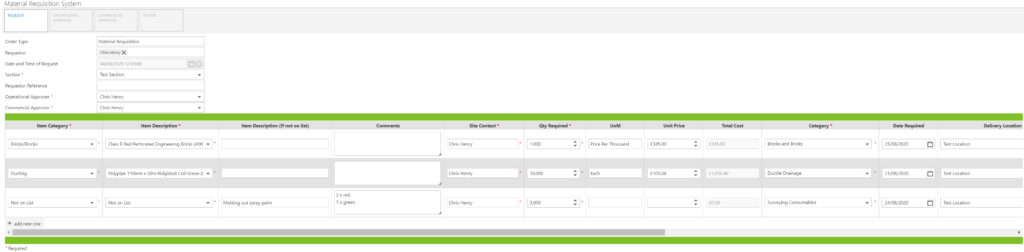

Now, it will help if I explain how the requisition system came about and what it entails. In a nutshell, we couldn’t say with 100% confidence where materials were being used and by whom, leaving the project at risk of disallowed costs. We needed a solution which could track all the requisitions and tell us which cost codes were being spent against and who was using the materials on site. Thus, the requisition system was built using the FlowForma BPM software. It comprised four separate requisition types: bulk orders, call-off orders against an already created bulk order, plant requisitions and material requisitions. We imported the project framework agreement so that prices were shown for some items, which helped drive the right commercial behaviours when requesting items. We had a detailed workflow with digital approvals, which allowed requests to be passed back or rejected if there was insufficient information. Most importantly, all the data was stored in a database for reporting and analysis.

Imagine you have £400M worth of requisitions stored for you to analyse, with no additional work needed when completing the forms, because the system automatically stores all the data produced. With the system in place, we could track every line item, which cost code it was charged to, whether it was the principal contractor or a subcontractor using the material, and even the design quantity, which let us identify material wastage. We could also track where each requisition was in the workflow and the time taken between steps, which in turn helped us to identify and remove blockers in the process. The uptake of the system was brilliant: it was intuitive, effective and a vast improvement on previous systems. Using the FlowForma software meant we could put in place a robust workflow with automatic emails, data storage and approvals, all of which were easy to set up, and eventually hand the system over to be managed by the procurement team.
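
To make the "time taken between steps" idea concrete, here is a minimal sketch of how the blockers could be surfaced from a workflow log. It is only an illustration: the step names, requisition IDs and timestamps are made up, and the real FlowForma data will have its own structure and field names.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical workflow log: (requisition id, step name, time the step was completed).
# These rows are invented for illustration and are not real project data.
events = [
    ("REQ-001", "submitted", "2020-03-02 09:00"),
    ("REQ-001", "commercial approval", "2020-03-02 15:00"),
    ("REQ-001", "procurement approval", "2020-03-05 15:00"),
    ("REQ-002", "submitted", "2020-03-03 10:00"),
    ("REQ-002", "commercial approval", "2020-03-03 12:00"),
    ("REQ-002", "procurement approval", "2020-03-05 12:00"),
]


def average_hours_per_step(events):
    """Return the average hours taken to reach each step from the previous one."""
    by_requisition = defaultdict(list)
    for req_id, step, stamp in events:
        completed = datetime.strptime(stamp, "%Y-%m-%d %H:%M")
        by_requisition[req_id].append((completed, step))

    waits = defaultdict(list)
    for steps in by_requisition.values():
        steps.sort()  # order each requisition's steps by completion time
        for (prev_time, _), (time, step) in zip(steps, steps[1:]):
            waits[step].append((time - prev_time).total_seconds() / 3600)

    return {step: sum(hours) / len(hours) for step, hours in waits.items()}


averages = average_hours_per_step(events)
slowest_step = max(averages, key=averages.get)  # the likeliest blocker
```

In practice this would be a measure in the Power BI report instead of a script, but the logic is the same: pair each step with the one before it, average the gaps, and look at the slowest step first.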

I believe that the key reason behind the system’s success was the collaborative teamwork between those of us building it, the procurement team, the commercial team and the client’s representatives. This ensured that not only was the original issue of tracking requisitions solved, but the system was also expanded to other types of requisitions, so we realised benefits beyond those originally expected.

Apart from the system itself, I now want to talk about all that lovely data. We can now give our client confidence that we are managing materials, and we are able to tell them which materials were bought by whom on any given day, and who used them. All of this is done through Power BI with no need to trawl through paper requisitions, which would be a nightmare. But this only represents a fraction of the potential in the data.

Just think of how we could leverage that £400M worth of spend. On a basic level, we could compare it with our framework agreements and look at whether we need to renegotiate because we are buying a lot of items that aren’t part of the agreement. On a more advanced level, we could start training an AI model on our spend, our programme and the external market, and soon be able to identify the best times to purchase material, when the price is low. We could even calculate whether it is worth purchasing material in bulk and storing it because prices could rise in the future. But how amazing would this all be if the industry teamed together and all this data was pooled so we could all analyse it? Huge infrastructure projects could put their programmes of work and bills of quantities into the data pool, and the model could be trained to know when a project was going to consume large volumes of materials and whether there would be either a shortage or a price increase. The model would then be able to tell projects when to buy materials and maybe even which quantities to purchase to keep prices down.
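
The basic framework comparison is simple enough to sketch. The example below is only a rough illustration with invented item codes, quantities and prices, and it assumes each line item records an item code, a quantity and the unit price actually paid, which may not match how the real data is stored.

```python
# Hypothetical framework agreement: item code -> agreed unit price in GBP.
framework_prices = {"REBAR-16": 650.0, "CONC-C40": 110.0}

# Hypothetical requisition line items: (item code, quantity, unit price paid in GBP).
line_items = [
    ("REBAR-16", 10, 650.0),
    ("CONC-C40", 100, 120.0),
    ("GEOTEX-1", 50, 40.0),  # not covered by the framework agreement
]


def framework_summary(line_items, framework_prices):
    """Summarise total spend, the off-framework share, and spend above agreed prices."""
    total = off_framework = overpaid = 0.0
    for code, quantity, unit_price in line_items:
        spend = quantity * unit_price
        total += spend
        if code not in framework_prices:
            off_framework += spend
        elif unit_price > framework_prices[code]:
            overpaid += quantity * (unit_price - framework_prices[code])
    return {
        "total_spend": total,
        "off_framework_share": off_framework / total if total else 0.0,
        "paid_above_agreed": overpaid,
    }


summary = framework_summary(line_items, framework_prices)
```

A high off-framework share would suggest the agreement no longer reflects what the project is actually buying, which is exactly the kind of evidence you would want when going back to renegotiate.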

So, what seems like a pretty basic system could save projects loads of money, which is super important when profit margins are 2% at best. It can do that just by avoiding disallowed costs, being paper free and allocating costs correctly. A mature system could increase margins even further by informing projects about external market conditions which could affect prices and by telling the project when to buy its materials. As the data pool grows and the system learns more, its effectiveness would carry on increasing and more benefits would be realised by those who use it. These are exciting times, and I really hope to deploy the requisition system elsewhere to break that milestone of £1 billion spend through it and capture even more of that lovely data!